Tapping the Matrix:

Harnessing distributed computing resources

using Open Source tools

Carlos Justiniano (cjus@chessbrain.net)

ChessBrain, msgCourier Projects

Good morning and thank you for attending this presentation.

My name is Carlos Justiniano and I'm the founder of the ChessBrain.net distributed computing project and the new open source msgCourier project.

I'm here today to speak to you about how open source tools are making it possible for individuals to harness distributed computing resources. I have a lot of material I'd like to share with you today, so please save your questions until after this presentation. I'll be available throughout the day if you'd like to approach me, or you can send me an email at the address shown behind me.

During the past six years I’ve been involved with public distributed computing projects. Four years ago Dr. Colin Frayn and I began work on a project called ChessBrain. ChessBrain is a distributed computing project which plays the game of chess using the processing power of Internet connected machines.

In January 2004, right here in Copenhagen, ChessBrain set a new Guinness World Record involving distributed computation. So I'm pleased to have this opportunity to speak to you here today.

After the event, I returned home and began working on an article for the O'Reilly OpenP2P site, entitled "Tapping the Matrix". I wanted to share what I had learnt during the past few years and an introductory article seemed like a good way to do that. The article explored how distributed computing projects work and how open source tools and open standards were playing their part.

My talk today is based on the earlier article for O'Reilly and two new papers which were prepared for this conference. I'll provide links to where you can download the papers at the end of this presentation.

In this session, we'll explore how the field of distributed computing has evolved over the past few years. Before we dive in, I'd like to briefly examine how publicly volunteered computing projects are different from isolated research projects and those involving the use of Grid platforms. With volunteered computing, it is the general public which provides the necessary computing resources required to achieve a common goal.

Project organizers have little to no control over the availability and reliability of remote systems. In contrast, Grid systems are specifically designed to offer control and predictability. However, the two methodologies are not mutually exclusive!

Volunteered computing systems can complement and extend the reach of Grid systems into the homes of public contributors. In this presentation I'll focus on volunteer computing. However, it will be easy to envision how Grid systems and public computing can come together using Open Source tools.

The Real Matrix

- In the blockbuster movie The Matrix, the machines found a way to harvest human beings.

- Today it's quite the opposite.

In the blockbuster movie, The Matrix, the machines found a way to harvest the bioelectric energy of humans who are enslaved and grown in pods. Like all good science fiction, the concept requires a stretch of one's imagination. However, today there is an inverted parallel -- humans are harvesting the processing power of millions of machines connected to a matrix we call the Internet.

Machines are underutilized

- Modern machines are quite capable!

- Most are underutilized.

- Even when utilized - most machines use very little of the CPU.

Modern machines are capable of executing billions of instructions in the time it takes us to blink. Consider the power of modern desktop systems and take a moment to consider the machines you have at home, school or at the office. Consider what those machines might be doing right now. The truth is many machines are idle for as much as 90% of an entire day. Even when active, most applications utilize less than 10 percent of a machine's CPU. Certainly there are applications which can sustain 60-80 percent CPU utilization; however, with the exception of research applications and enterprise production systems, most desktop systems found throughout the world are underutilized.

The company I work for has a work force of over 30,000 employees. Most employees have one to two machines at their desk. Most of those machines are always on, because our IT department monitors the machines and applies software updates overnight. Many of our employees have high-end desktop systems sitting idle at home while they're at work. This situation isn't new or uncommon.

The situation hasn't been ignored!

- In 1997-98 several DC projects were formed

- SETI@home becomes the most famous

- Today dozens of DC projects exist

For researchers, the lure of harnessing spare computing cycles has been simply too good to pass up. In early January 1996, a project known as the Great Internet Mersenne (Mur-seen) Prime Search (also known as GIMPS) began an Internet distributed computing project in search of prime numbers. A month later the project reported the involvement of 40 people and about 50 computers. A year later the number grew to well over 1000.

The following year, in 1997, Earle Ady, Christopher Stach and Roman Gollent began developing software that would enable network servers to coordinate a large number of remote machines. An initial goal was to compete in RSA’s 56-bit encryption challenge. As many of you know, encryption schemes are susceptible to brute-force attacks given sufficiently powerful hardware. And so, the group was going to need a considerable amount of hardware to tackle an encryption key space consisting of 72 quadrillion encryption keys.
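The figure of 72 quadrillion follows directly from the key length: a 56-bit key has 2^56 possible values. The short Python sketch below works through that arithmetic and gives a rough feel for why a large pool of machines matters. The key-testing rates are hypothetical round numbers chosen only for illustration; they are not distributed.net measurements.

```python
# Size of a 56-bit key space and a rough brute-force time estimate.
KEY_BITS = 56
key_space = 2 ** KEY_BITS  # 72,057,594,037,927,936 keys, about 72 quadrillion


def days_to_search(keys_per_second: float, fraction: float = 1.0) -> float:
    """Days needed to test `fraction` of the key space at the given rate."""
    seconds = key_space * fraction / keys_per_second
    return seconds / 86_400  # seconds per day


# Hypothetical rates, assumed for illustration only.
single_machine = 1_000_000                 # keys tested per second on one machine
volunteer_pool = 10_000 * single_machine   # 10,000 such machines working together

print(f"{key_space:,} keys")
print(f"one machine:     {days_to_search(single_machine):,.0f} days")
print(f"10,000 machines: {days_to_search(volunteer_pool):,.1f} days")
```

On average the correct key turns up after about half the key space has been searched, so passing `fraction=0.5` gives the expected rather than the worst-case time.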

By mid-1997, the group became formally known as distributed.net, and by October they had discovered the correct key to unlock the RSA challenge message which read: “The unknown message is: It’s time to move to a longer key length”. In October, the New York Times published an article entitled “Cracked Code Reveals Security Limits” and the use of volunteer computing once again appeared on the public's radar.

While both GIMPS and Distributed.net’s efforts achieved public recognition, another project would go on to become a household name in distributed computing circles. In 1998, a group of researchers at the University of California in Berkeley launched the SETI@home project. The project employs Internet-connected computers to aid in the search for extraterrestrial intelligence. SETI@home captured the public’s interest and grew to several hundred thousand contributors. In 1999, a year after the project was founded, I began participating and have contributed over 43 thousand hours of CPU time over the past five years. The project’s success brought with it a significant amount of computing power as thousands of other people discovered the project.

In 1998, project leader and researcher Dr. David Anderson compared SETI@home’s distributed computing potential to the fastest computer at the time, IBM’s ASCI White. IBM built the 106 ton, $110 million system for the U.S. Department of Energy. The supercomputer was capable of an impressive peak performance of 12.3 TFLOPS. Anderson wrote that, compared to IBM's ASCI White, SETI@home was faster and cost less than one percent as much to operate.

Naturally, the cost savings are due to the fact that SETI@home’s computations are distributed and processed on remote machines, machines which are paid for, operated and maintained by the general public.

GIMPS, Distributed.net and SETI@home are still active today. GIMPS, the longest running distributed computing effort, recently discovered the existence of a prime number consisting of over seven million digits! By the way, prime numbers have important uses in the field of cryptography. They're used in public key algorithms, hash tables, and pseudorandom number generators.

In addition to the 1997 RSA 56-bit challenge, the Distributed.net project has successfully completed a number of other encryption challenges. While SETI@home hasn't found ET, it has been instrumental in raising the public’s awareness of distributed computing efforts. During the past few years the SETI@home group has also created the Berkeley Open Infrastructure for Network Computing platform (also known as BOINC) which is already helping to launch new distributed computing projects. We’ll take a brief look at BOINC later in this presentation.

GIMPS, Distributed.net and SETI@home are not alone. Today, the O'Reilly OpenP2P Directory and The AspenLeaf DC web site list dozens of active projects.

Resources Exist Behind Locked Doors

Distributed computing resources can be found in many locations. However, each location has at least one thing in common: people. People control access to these resources. To paraphrase the Matrix: They are the gatekeepers. They are guarding all the doors; they are holding all the keys.

Resources Exist Behind Locked Doors

One to many machines at home

- Connected directly to DSL/Cable modem

- Connected to network switch to DSL/Cable modem

- Each machine is capable of independently communicating with the outside world

Conservative estimates indicate that there are roughly 800 million personal computers in use. About 150 million of these are Internet-connected machines, a number which is expected to increase to one billion by the year 2015. Today, many homes contain more than one machine, often connected via a private home network. In addition, an increasing number of machines are connected via always-on broadband connections.

Resources Exist Behind Locked Doors

Garage farms and clusters

- Multiple machines forming a server farm

- Often used to participate in one or more DC projects

- Each machine typically has Internet access

Some computing enthusiasts go so far as to build and operate their own clusters. The number of people who actually do this is larger than you might think! There exists an Internet sub-culture of enthusiasts who refer to themselves as DC'ers. They form and participate in distributed computing teams, some of which consist of thousands of individual members.

Resources Exist Behind Locked Doors

Institutions: Businesses, Universities

- Multiple machines tightly secured

- Controlled availability

- Existing server farms and clusters

- Some have existing GRID Computing environments

Naturally, a great many machines exist in businesses and universities throughout the globe. Not surprisingly, these machines are often tightly secured and controlled. However, people control access to these machines and they often grant access by allowing their machines to run well-behaved distributed computing software. At first thought, it may seem unlikely that large institutions would grant access to a significant number of machines. I can assure you that this is far from unlikely! On the ChessBrain project we received offers from groups hosting 100-1000 node clusters from locations such as the US, Germany and right here in Copenhagen.

The key idea behind volunteered computing is that there exists a wealth of distributed computing resources, and under the right conditions, the people who control them are actually willing to share their resources.

The Volunteer Computing Model

- Distributed Computing -> Public Computing -> Volunteer Computing

- Requires active volunteers

- Implemented as client / server applications

- The client component makes the model unique

The term “Distributed Computing” is well recognized by practitioners and participants alike; however, the term has lost its once specific meaning. The general media now uses the term to describe web services and service oriented architecture (SOA) solutions. A newer term is necessary in order to differentiate the form of distributed computing which we’re considering in this presentation from the now overused term. Distributed computing has been referred to as “Grassroots Supercomputing” and “Public Computing”; however, those terms fall short of what people actually do when they participate as part of a distributed computing project. The inescapable fact is that in order for distributed computing projects to work they must have volunteers.

So, the term “Volunteer Computing” has emerged to describe distributed computing projects where volunteers supply the necessary computing resources. Most volunteer computing projects (with the exception of a small few) are implemented as client-server applications. The vast majority of volunteer computing projects do not rely on the client-side existence of runtime frameworks such as Java or .NET (although this will likely change).

End users are required to download and install software on their machines. This is what distinguishes a volunteer computing project, where participation is “active”, from the relatively passive use of web-enabled services. The presence of an active role is why volunteer computing is often referred to as Peer-to-Peer computing rather than client-server from a traditional web-centric point of view. These important distinctions introduce additional levels of complexity which we’ll examine in greater detail.

The Volunteer Computing Model

Public computers support project servers

Let’s take a look at how volunteer computing projects actually work. Fundamentally, users download and install a relatively small client application which is capable of communicating with project servers to retrieve individual work units. Each unit of work contains the data - and in some cases - the instructions that a client node can use to process the work. Upon completion the client application sends the results of the work unit to a project server. A project server is responsible for collecting results and performing any post processing that may be required. Compiled results are stored in backend databases. A project server may in turn use the processed results to determine how to generate newer work units for subsequent distribution.
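The cycle just described (fetch a work unit, process it, return the result) can be pictured with a minimal Python sketch. It is an in-memory stand-in, assuming nothing about any real project's protocol: the `WorkUnit`, `ProjectServer` and `run_client` names are hypothetical, the "computation" is a placeholder, and a real client would talk to the server over the network rather than by direct method calls.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class WorkUnit:
    unit_id: int
    data: list  # the payload a client must process


class ProjectServer:
    """Hands out work units and collects results (in-memory stand-in)."""

    def __init__(self, units):
        self.pending = deque(units)
        self.results = {}  # unit_id -> result; a real project would use a database

    def get_work(self):
        """Return the next work unit, or None when nothing is left."""
        return self.pending.popleft() if self.pending else None

    def submit_result(self, unit_id, result):
        self.results[unit_id] = result


def process(unit):
    """Placeholder computation: sum of squares of the payload."""
    return sum(x * x for x in unit.data)


def run_client(server):
    """Fetch, process and report work units until none remain."""
    completed = 0
    while True:
        unit = server.get_work()
        if unit is None:
            break
        server.submit_result(unit.unit_id, process(unit))
        completed += 1
    return completed


server = ProjectServer([WorkUnit(1, [1, 2, 3]), WorkUnit(2, [4, 5])])
run_client(server)
print(server.results)  # {1: 14, 2: 41}
```

In practice the server would also track which units have been handed out but not yet returned, since volunteer machines can disappear at any time.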

The Volunteer Computing Model

Client-side considerations

- Developers must create well behaved client-side applications

- Native support for multiple platforms: Windows, Linux and Mac - in that order

- Software must navigate firewall and proxy servers

- Secure communication is essential

When an end user agrees to run a piece of software on his or her machine it is because at some level the user trusts that the software is implicitly safe and well behaved. A significant obstacle for volunteer computing project developers is the creation of native client-side applications. Not only must the software perform the primary task (the remote computation) but it must do so without requiring additional preinstalled client-side software. This is because it’s important to streamline the end user experience of acquiring and operating the project software. Otherwise a usability barrier will be created, and the would-be project participant may leave in search of easier projects.

The challenge for project developers is to create applications which are relatively self-contained in an easy to download and install package. Additionally, developers must choose which native platforms to support. Because volunteer computing projects typically require an extensive number of participants, the need to support the Microsoft Windows platform is somewhat inescapable. Most project developers choose to support MS Windows, Linux and Apple Mac OS X, in that order.

Further complications are presented when the developer realizes that the client-side application must include the ability to navigate firewall and proxy servers. Additionally, communication must be secured using encryption in order to ensure that the information sent to project servers has not been tampered with. Project developers have a great deal of control over the choice of backend solutions; for the client-side aspect, however, this is certainly not the case. The introduction of the client-side component presents a significant hurdle. Fortunately, comprehensive solutions have emerged in recent years.
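To make the tamper-detection requirement concrete, here is a minimal Python sketch. Note that it swaps in a message authentication code (HMAC-SHA256 from the standard library) rather than encryption: it detects modification of a result in transit but does not hide its contents. The shared key, the message layout and the function names are assumptions made for illustration, not the scheme used by any particular project.

```python
import hashlib
import hmac
import json


def sign_result(payload: dict, key: bytes) -> dict:
    """Serialize a result and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True)
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_result(message: dict, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(key, message["body"].encode("utf-8"), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["tag"])


# Hypothetical per-client secret, assumed to be issued when the client registers.
key = b"per-client secret issued at registration"

message = sign_result({"unit_id": 1, "result": 14}, key)
print(verify_result(message, key))   # True

# Altering the body in transit invalidates the tag.
tampered = dict(message, body=message["body"].replace("14", "15"))
print(verify_result(tampered, key))  # False
```

A tag like this only protects the message on the wire. It does not stop a volunteer who controls the client from submitting a bad result, which is one reason projects often send the same work unit to more than one machine.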

Open Source Tools

- Open Source tools are playing a vital role

- Decreasing costs make it economically feasible

- The community supports researchers

Not surprisingly, Open Source tools are playing a vital role in the development of volunteer computing projects. The decreasing costs of commodity hardware, coupled with the free availability of highly capable software, have made such projects not only possible, but also economically feasible. Economics, however, is only one of many reasons. The strength of the open source community has given intrepid researchers the sense that they are far from alone in their efforts.

Open Source Tools

LAMP Building Blocks

- Great:

- Server-side development is well understood by the community

- Not so great:

- Client-side application and portability can be challenging

The GNU/Linux, Apache, MySQL and P-scripting language tools form the building blocks for a great many open source projects. While GNU/Linux itself is prominently represented in the LAMP acronym, it is in fact replaceable by other fine operating systems. The use of Apache, MySQL and a scripting language such as PHP, Perl or Python enables developers to create web accessible services. Because most volunteer computing projects are client/server applications, the LAMP toolset is ideal for developers who wish to construct their own solutions but don’t want or need to build the underlying software infrastructure.

The challenge in using LAMP based tools is that the project developer must still consider how to tackle the client-side application. One approach, which we’re adopting for ChessBrain, is to use a cross-platform development tool such as wxWidgets and GNU g++ to simplify the creation of Windows, Linux and Mac GUI applications. The solutions we examine next embrace LAMP tools to varying degrees.

Open Source Tools

Jabber

- Great:

- Mature Open Source project

- Well supported

- Message based methodology - ideal for distributed computing

- XMPP protocol offers security

- A wealth of freely available source code

- Not so great:

- Complete dependency on XML

- May not be ideal in cluster environment

- Not designed for clusters out-of-the-box

- Learning curve required to build custom applications

Jabber was first developed in 1998 by Jeremie Miller as an open source Instant Messaging system and viable alternative to proprietary IM solutions such as the AOL, ICQ, MSN and Yahoo messengers. Over the years the underlying Jabber protocol was formalized into the Extensible Messaging and Presence Protocol (XMPP). Today, XMPP is endorsed by IBM, Google and many others. Jabber quickly outgrew its humble IM beginnings to embrace XML based messaging.

Because volunteer computing is largely concerned with message based communication between servers and clients, the use of a messaging server is quite natural. The Jabber community has developed both server and client-side software which is freely available. The existing code base gives project developers a significant head start in creating their own volunteer computing solutions based on Jabber. Furthermore, XMPP is a secure XML streaming protocol which addresses many of the issues relating to secure communication.

The only disadvantage with Jabber which we’ve identified is the dependency on XML and TCP. However, when XML is properly utilized the disadvantage may become slight and even insignificant.
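To give a rough feel for the XML overhead in question, the Python sketch below encodes the same small result once as generic XML and once as a packed binary record. The element names, the field layout and the sample values are hypothetical; this is plain XML built with the standard library, not an XMPP stanza, and it is meant only to show the order of magnitude involved.

```python
import struct
import xml.etree.ElementTree as ET


def encode_xml(unit_id: int, score: int, move: str) -> bytes:
    """Encode a result as a small XML document."""
    root = ET.Element("result", {"unit": str(unit_id)})
    ET.SubElement(root, "move").text = move
    ET.SubElement(root, "score").text = str(score)
    return ET.tostring(root, encoding="utf-8")


def encode_binary(unit_id: int, score: int, move: str) -> bytes:
    """Encode the same fields as a fixed 11-byte record in network byte order:
    unsigned 32-bit id, signed 16-bit score, 5-byte move string."""
    return struct.pack("!Ih5s", unit_id, score, move.encode("ascii"))


def decode_binary(record: bytes):
    unit_id, score, move = struct.unpack("!Ih5s", record)
    return unit_id, score, move.rstrip(b"\0").decode("ascii")


xml_msg = encode_xml(42, 35, "e2e4")
bin_msg = encode_binary(42, 35, "e2e4")
print(len(xml_msg), "bytes as XML;", len(bin_msg), "bytes packed")
```

For messages this small the markup dominates the payload. As work units grow, or when many results are batched into a single message, the relative cost of the markup shrinks, which is the sense in which careful use of XML can make the disadvantage slight.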

Open Source Tools

GPU

- Great:

- Open Source project

- Plugin architecture

- Project is well underway

- Active project on SourceForge.net

- Not so great:

- Implemented in Delphi/Kylix, a Pascal dialect

- Limited reach of machines (about 2000)

- Sits on top of Gnutella, which may be blocked in many environments

The Global Processing Unit (GPU) project is a framework for distributed computing over the Gnutella P2P network. GPU developers decided to build their framework using the proprietary Borland Delphi rapid application development environment (featuring a Pascal dialect). This presents an obstacle for developers who don’t know Pascal or haven’t looked at the language recently.

In addition, GPU relies on the Gnutella P2P network for communication services such as peer and resource discovery. A consequence of this decision is that many businesses and universities block Gnutella network traffic, thereby limiting the number of potential project contributors. GPU’s founder Tiziano Mengotti also points out that the use of Gnutella limits the available number of machines to about 2000. This can be a severe limitation for projects requiring thousands of machines for effective problem decomposition and distribution.

We believe GPU is an intriguing project with obstacles that may be overcome with some effort. The project is actively exploring P2P grid concepts and is certainly worth a look.

Open Source Tools

BOINC

- Great:

- Designed specifically for use by distributed computation projects

- Server architecture is well documented

- Client applications exist for multiple platforms

- Software is actively used by existing projects

- Not so great:

- Server restricted to Unix environments

- Not designed for clusters out-of-the-box

- Clients written to work with HTTP

For developers unable or unwilling to invest in creating custom solutions there is the Berkeley Open Infrastructure for Network Computing (BOINC) project. BOINC was created by the SETI@home group. Today, the framework is used by a growing number of high profile projects. BOINC project contributors, led by Dr. David P. Anderson of the Space Sciences Laboratory at the University of California at Berkeley, have leveraged their experience on SETI@home to create a generalized solution intended to meet the needs of most project developers.

Consider the following benefits:

- Open Source. Both client and server software is freely available.

- Secure client/server communication.

- Built on top of LAMP tools.

- Well defined application programming interface for developers of both client and backend server applications.

- End users can choose to participate in one or more volunteer computing projects.

- Name brand recognition of having your project recognized and loosely associated with both BOINC and other high-profile projects.

BOINC is a solution that is ready for use today. The solution is well documented and well worth a closer look. BOINC provides source code for building client-side applications. End users download a one to six megabyte file (the size depends on their target platform and project-specific additions) onto their machine in order to participate in one or more volunteer computing projects. On the backend server side, BOINC consists of several ready-made server components which communicate with one another and a MySQL database server. The Apache web server is used with FastCGI and Python to create web interfaces.

BOINC Architecture

As a BOINC project developer one is only responsible for creating the client-side and server-side behavior that addresses a specific project’s needs. The BOINC framework contains clearly designated areas where one is responsible for adding project specific code modules. The development platform consists of GNU C++ on the server side, and GNU C++ or Microsoft Visual Studio / .NET on the client side. The server environment is expected to be a Linux box with MySQL, Apache and Python installed. The BOINC server side solution utilizes the MySQL database server to store and retrieve project specific information such as work units, results and user accounts. This diagram shows a user interacting with the BOINC system. The client side components are shown to reside in the user’s machine.

Below the client-server separator line we see the components of the BOINC backend. The two bold boxes represent the location of client and server project specific modules. As you can see, BOINC provides a great deal to the project developer. I feel compelled to point out that BOINC’s advantages will very likely outweigh the disadvantages we’ll list in this next section. Keep in mind that BOINC is evolving to meet emerging needs. Visit the project website to learn of new developments.

During the development of the new ChessBrain II project we identified the following issues while investigating BOINC.

- Despite BOINC’s tool support, project developers need to understand and feel comfortable with a number of technologies in order to gain control over their project. In short, BOINC doesn’t yet offer out-of-the-box supercomputing, although at this time it comes closer than any non-commercial tool in existence.

- Complete reliance on LAMP excludes MS Windows environments.

- The BOINC project is structured in the traditional view of client / server architectures. We feel that a P2P view is the emerging future of volunteer computing efforts.

- Uses XML over HTTP which may result in larger communication packets than some project developers may desire.

- End user machines are seen as compute nodes. We feel that volunteer computing projects will need to increasingly embrace server farms, Beowulf clusters and Grid systems.

According to project leader Dr. Anderson, BOINC was specifically created for scientists - not software developers and IT professionals. The goal of BOINC is to enable scientists to easily create volunteer computing projects to meet their growing needs for computational resources. Toward this end, BOINC is a remarkable achievement which will continue to have a profound impact on the future of scientific research.

The ChessBrain II Project

I'd like to speak to you a bit about the ChessBrain project and share with you some of the exciting developments we have planned. ChessBrain is a volunteer computing project which is able to play the game of chess against a human or autonomous opponent while using the processing capabilities of thousands of remote machines. ChessBrain made its public debut during a World Record attempt here in Copenhagen in January 2004, during a live game against top Danish Chess Grandmaster Peter Heine Nielsen.

What makes ChessBrain unique is that unlike other volunteer-based computing projects, ChessBrain must receive results in real-time. Failure to receive sufficient results within a specified time will result in weaker play. Tournament games are played using digital chess clocks where the time allotted per game is preset and not renegotiable. So while ChessBrain waits for results its clock is ticking.
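The effect of a hard deadline can be sketched in a few lines of Python. This is a simplified model written for these notes, not ChessBrain's actual selection logic: the `PeerResult` record, the scores and the arrival times are all hypothetical, and a real engine combines partial search results in a far more involved way.

```python
from typing import List, NamedTuple


class PeerResult(NamedTuple):
    arrival: float  # seconds after the search started
    move: str
    score: int      # higher is better for the side to move


def choose_move(results: List[PeerResult], deadline: float, fallback: str) -> str:
    """Pick the best-scoring move among results that arrived by the deadline."""
    on_time = [r for r in results if r.arrival <= deadline]
    if not on_time:
        return fallback  # nothing arrived in time, so play a locally computed move
    return max(on_time, key=lambda r: r.score).move


results = [
    PeerResult(2.1, "e2e4", 30),
    PeerResult(4.8, "d2d4", 35),
    PeerResult(9.5, "g1f3", 60),  # the strongest line, but it arrives late
]

print(choose_move(results, deadline=5.0, fallback="e2e4"))   # d2d4
print(choose_move(results, deadline=10.0, fallback="e2e4"))  # g1f3
```

With the shorter deadline the strongest result is simply ignored because it arrives too late, which is the "weaker play" described above.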

Our goal on ChessBrain has been to create a massively distributed virtual supercomputer which uses the game of chess to demonstrate speed-critical distributed computation. We chose chess because of the parallelizable nature of its game tree analysis, and because of our love for the game. This makes the project professionally rewarding as well as personally enjoyable.

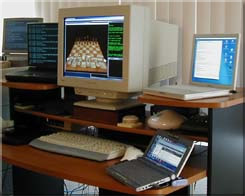

The existing design of ChessBrain features a single Intel P4 3 GHz machine. The machine hosts a database, the ChessBrain SuperNode server software and a chess game server. During the exhibition game against Grandmaster Nielsen the machine was overloaded as it tried to support thousands of remote PeerNode clients. Prior to the event we speculated that perhaps a thousand machines might support the chess match. We were not expecting thousands of machines. Fortunately, ChessBrain made it through the match, securing a draw on move 34. We've learned a great deal during and after the event. Our improved understanding is being applied on ChessBrain II.

Perhaps the single biggest change with ChessBrain II is that we're replacing the idea of a single SuperNode with the concept of clusters of SuperNodes that can consist of several thousand PeerNodes. Our vision is to see SuperNodes establish P2P relationships among collaborating SuperNodes. The software for each SuperNode is being designed to create communities of machines, where each community can consist of thousands of machines.

During the game against Grandmaster Nielsen several clusters consisting of over 200 machines, and a few others consisting of 50-100 machines, participated during the event. At the time ChessBrain wasn't designed to take advantage of clusters - like current volunteer computing projects, ChessBrain was designed for use on individual machines. Over time it became clear that ChessBrain software would have to embrace a wider spectrum of computing environments which include Beowulf clusters, compute farms and Grids.

Our vision for ChessBrain has evolved toward P2P distributed clusters, where volunteer computing enthusiasts are promoted to virtual cluster operators. In the parlance of graph theory our goal has become to create more hubs.

Promoting volunteer computing enthusiasts to virtual cluster operators is non-trivial. If we opted for a BOINC-like framework we would have to expect that each cluster operator would be knowledgeable in the use of open source tools such as MySQL and Apache. This simply isn't practical. On the ChessBrain project our Microsoft Windows based contributors outnumber our Linux contributors two to one. We quickly realized that our new SuperNode software would have to be a self-contained product which can cluster local or remote machines with ease. The new SuperNode software is being built on top of msgCourier in order to address the challenges we're facing on ChessBrain II.

msgCourier is a hybrid application consisting of built-in components that enable P2P messaging for distributed computing applications. msgCourier is being developed as an open source project which is intended to support not only next generation volunteer computing but also a host of other potential applications. In this diagram, each server box with connecting lines will run a msgCourier server.

The msgCourier Project

Powering ChessBrain II

- Great:

- Targeted at Distributed Computing and P2P

- Strong focus on no external dependencies

- Designed to fully leverage Linux and MS Windows platforms

- Built-in monitoring and configuration

- Not so great:

- Not quite ready yet...

The primary goal on msgCourier is to eliminate external run-time dependencies. We chose to build msgCourier using the C++ programming language with initial support for Linux and MS Windows.

msgCourier

We've leveraged a number of free and open source components to create a robust server application. In support of C++ we use the Boost programming library. We added scripting support to msgCourier by embedding the Tcl language interpreter. We use the C++/Tcl project's software to bind C++ and Tcl. For our database needs we've embedded the SQLite SQL engine into msgCourier. We're securing msgCourier communication using the Crypto++ library. For our network monitoring needs we're using the AT&T Research Graphviz graph visualization software. When using these components msgCourier is under two megabytes in size under Windows and about three megabytes under Linux. Using these libraries costs us a more complex build process, but eliminates runtime dependencies.

msgCourier

We built msgCourier as a multithreaded application with support for both TCP and UDP based messaging. Messages inside of msgCourier are handled by loadable components called message handlers. The msgCourier server can be configured to route messages to specific handlers on a local machine or to another remote server. This diagram shows a list of components present in the current msgCourier application. The crypto usage box on the bottom right is currently only partially implemented.

The msgCourier server has a built-in multi-threaded multiple connection web server component. Application developers can leverage the built-in web server to create their own custom configuration and monitoring pages or web based services. The use of HTTP and XML over TCP is optional. However, msgCourier has internal support for HTTP and XML because of their ubiquity and ability to flow through firewall and proxy servers.

Let's take a look at a live demonstration of msgCourier.
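As an aside for readers of these notes, the handler-based routing described above can be pictured with a small Python sketch. msgCourier itself is written in C++, and nothing here reflects its real interfaces: the `Router` class, the message layout and the `forward` callback are hypothetical names used only to illustrate dispatching a message either to a local handler or to a remote server.

```python
from typing import Callable, Dict, Optional

Handler = Callable[[dict], str]
Forwarder = Callable[[str, dict], str]  # (remote address, message) -> reply


class Router:
    """Routes each message to a local handler or forwards it to a remote server."""

    def __init__(self, forward: Optional[Forwarder] = None):
        self.local: Dict[str, Handler] = {}
        self.remote: Dict[str, str] = {}  # message type -> remote server address
        self.forward = forward

    def register(self, msg_type: str, handler: Handler) -> None:
        self.local[msg_type] = handler

    def route_remote(self, msg_type: str, address: str) -> None:
        self.remote[msg_type] = address

    def dispatch(self, message: dict) -> str:
        msg_type = message["type"]
        if msg_type in self.local:
            return self.local[msg_type](message)
        if msg_type in self.remote and self.forward is not None:
            return self.forward(self.remote[msg_type], message)
        raise KeyError(f"no handler for message type {msg_type!r}")


def fake_forward(address: str, message: dict) -> str:
    """Stand-in for a network send; a real server would open a connection."""
    return f"forwarded {message['type']} to {address}"


router = Router(forward=fake_forward)
router.register("ping", lambda m: "pong")
router.route_remote("result", "supernode-2.example:3400")  # hypothetical address

print(router.dispatch({"type": "ping"}))
print(router.dispatch({"type": "result", "unit": 7}))
```

Keeping the routing table separate from the handlers is what lets the same message type be served locally on one machine and forwarded on another purely by configuration.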

msgCourier hosted web pages

The DistributedChess.net website is currently being hosted on top of a msgCourier server.

msgCourier hosted web pages

If we point to distributedchess.net and specify port 3400 we're able to access the msgCourier console. Here we see server status information including graphs showing the number of connections per minute and per hour along with the number of messages served during that time.

msgCourier hosted web pages

Below the graphs we see the system event log which allows an operator to peer into the server's inner workings. If we scroll the page we can see an access log showing the type of messages that are being sent and received.

msgCourier hosted web pages

This next screen shows a list of connected Supernodes. Each node's information can be expanded and collapsed for viewing. msgCourier is currently undergoing its first round of public review testing. The framework for many of the features we've described is already in place and quickly evolving. We invite you to learn more about msgCourier at msgcourier.com. Keep in mind that it's still very early in its development.

Emerging Trends

- P2P will play a greater role

- Beowulf clusters, compute farms and Grids will work together

- The general public will play a larger role

In closing this presentation I'd like to examine emerging trends in volunteer-based computing. We see the volunteer computing landscape evolving in sophistication to meet emerging project needs. A client-server-centric view will become less common as P2P based solutions become the norm. Grid practitioners are already realizing that volunteer computing makes sense for certain aspects of their work. In addition, they’re discovering that the economic benefits of leveraging volunteer computing are well worth investigating.

Consider how frequently in-house clusters can be upgraded. Compare that to the upgrade patterns and the growing number and availability of Internet connected machines in the possession of the general public. The exponential growth of the public computing sector is becoming difficult to ignore. Clusters exist throughout the world. Increasingly, clusters are being built and hosted in the homes of computer enthusiasts.

Volunteer computing projects which specifically target clusters have an opportunity to benefit from the computing potential inherent in tightly coupled networked systems. In this approach a node on a local cluster is designated to receive batches of work units, and/or instructions for generating local work units, from a project server. This local master node is then responsible for distributing work to the local nodes, thereby leveraging the benefits of local high speed intercommunication. Completed work units are then collected by the master node and sent back to the project server.
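The master node cycle just described can be sketched in a few lines. This is a minimal illustration under stated assumptions, not code from ChessBrain or msgCourier. The work unit shape, process_unit, fetch_batch and submit_results are all hypothetical, and a thread pool stands in for the local cluster nodes.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

WorkUnit = Tuple[int, int]  # (unit id, payload); hypothetical shape


def process_unit(unit: WorkUnit) -> Tuple[int, int]:
    """Stand-in for the real computation done by one local node."""
    unit_id, payload = unit
    return unit_id, payload * payload


def master_cycle(
    fetch_batch: Callable[[], List[WorkUnit]],
    submit_results: Callable[[Dict[int, int]], None],
    local_nodes: int = 4,
) -> Dict[int, int]:
    """One cycle of the local master node."""
    batch = fetch_batch()  # a single request to the project server
    # The thread pool simulates distributing units to local cluster nodes.
    with ThreadPoolExecutor(max_workers=local_nodes) as pool:
        results = dict(pool.map(process_unit, batch))
    submit_results(results)  # a single upload for the whole batch
    return results


# Usage: stand-ins for the project server's two endpoints.
uploads = []
completed = master_cycle(
    fetch_batch=lambda: [(1, 2), (2, 3), (3, 4)],
    submit_results=lambda results: uploads.append(dict(results)),
)
```

However many local nodes take part, the project server sees one download and one upload per batch.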

This approach eliminates the need for each node in a cluster to individually communicate with a central project server. Thus, this solution makes effective use of the cluster. A growing trend is to explore how volunteer computing can allow Grid systems to freely tap the resources available in modern computing households. It’s important to realize that Grid systems, while amazingly flexible, are still relatively finite in capacity. In contrast, the availability of volunteer computing resources increases with public interest. A volunteer computing project that captures the imagination and interest of the general public can scale to an impressive amount of computing power in a matter of days. We believe that Grid practitioners will find it increasingly compelling to explore extending their Grid facilities using volunteered computing resources.

In the earlier days of Internet distributed computation efforts, researchers realized that the Internet was quickly expanding and making it feasible to leverage remote resources. Today the following factors are helping to change our view of how future projects might be structured.

- Network speeds are increasing.

- The sophistication and interests of computer enthusiasts are expanding. Many are networking machines and exploring volunteer computing projects.

- The number of Internet connected machines continues to grow at an impressive rate.

- Commodity hardware continues to increase in speed and storage capacity.

We’re seeing that the sophistication of current computer enthusiasts has reached a point where it has become feasible to consider them in the role of remote cluster operators. The goal here is to distribute the bandwidth and processing loads to remote hubs. In addition, other benefits such as increased fault tolerance may be realized. Volunteer computing software with P2P capabilities such as self organization will become increasingly common. The vision we share with other practitioners is one of a P2P network of hubs which magnifies the network potential of volunteer computing.

This field continues to offer exciting potential - potential that is still only moderately tapped. I hope that you'll consider learning more about volunteer computing projects. In addition, if you're interested in starting your own project, contact me and I'll be glad to help in any way I can.

Thank you for your time this morning. I'll now open the floor to any questions you might have.

Resources

- Google: BOINC, Jabber, GPU, msgCourier

- Contact: Carlos Justiniano cjus@chessbrain.net

- Latest msgCourier source code posted on msgCourier.com

- Conference papers posted at chessbrain.net